Dec

Can AI Replace Human Respondents In Qualitative Research?

jerry9789 0 comments artificial intelligence, Brand Surveys and Testing, Brandview World, Burning Questions

Like most industries these days, market research is no stranger to AI. Its broad applications include synthetic respondents: individual profiles constructed by Large Language Models (LLMs) from real or simulated data. They offer fast, cheap, and scalable synthetic data that closely mimics how human participants would respond, a boon for quantitative research. But can synthetic respondents be just as effective in qualitative research? Can AI-powered profiles fully take over the role of human respondents in market research?

Image: Diana

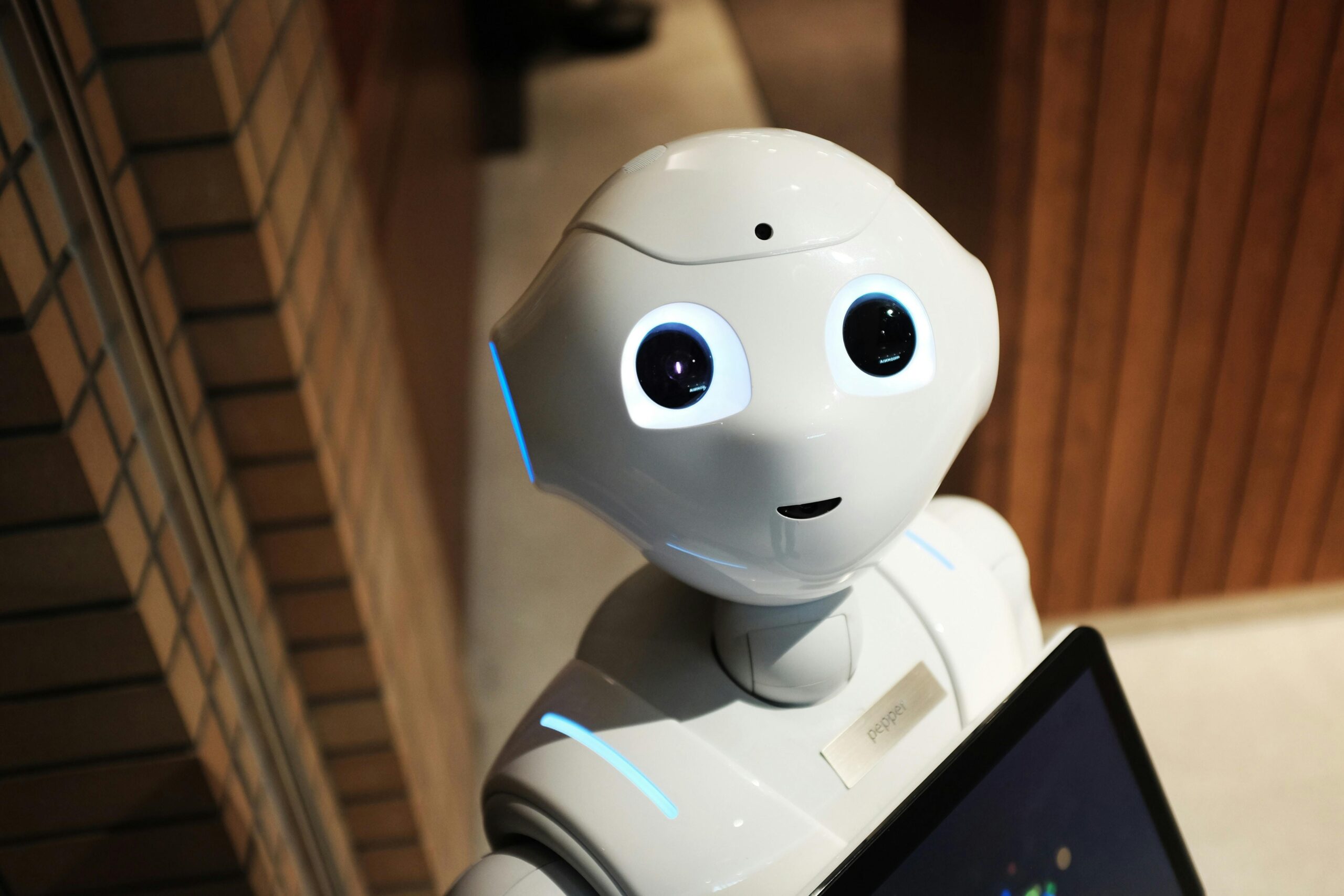

Synthetic Respondents and Qualitative Research

L&E Research recently hosted a webinar sharing their findings and observations from testing synthetic respondents across a variety of qualitative research tasks. They shared that AI characteristically produces quick, structured, and consistent surface-level insights. It does well at detecting macro trends in usage or preferences, screening concepts when you need to compare multiple ideas at scale, and spotting issues during survey testing. It is also capable of gap-filling, or simulating missing segments from known data, as well as bulk analysis for quickly summarizing large volumes of open-ended responses.

The key takeaway from L&E is that AI can describe what people do, but it falls short of explaining why they do it. AI fundamentally excels at following patterns, but it struggles to uncover the emotional drivers and motivations behind certain responses. AI can match logic, but it can’t fill in tone, nuance, or context the way human insight and experience can.

Most AI models are also built on public data and may not know how real people would respond to certain questions. When the engineers tried to steer AI agents toward how real participants would respond, the agents rejected this and firmly stood by the perspective formed from the vastness of public data.

Additionally, AI can be absolutely and confidently wrong. Synthetic data can look convincingly human but since AI relies on patterns instead of experience, the air of confidence it puts up doesn’t guarantee accuracy.

Of course, the hosts added a disclaimer that this is where synthetic respondents stand right now, and no one can tell how different things might be in the years to come. But the continued use of AI in market research, or any other industry for that matter, is inevitable thanks to the operational efficiency it grants, and that is reason enough to continue studying and developing synthetic respondents.

Image: Ron Lach

Why The Human Factor Matters

In market research, emotions matter and context counts. AI can prove to be a powerful partner but it is no replacement for lived insight or validation. Human researchers are simply going to remain essential.

AI’s inherent structure and consistency reflect its pursuit of perfection; however, humans are neither perfect nor simple. Humans are emotional and, oftentimes, irrational. AI participants respond based on their approximation of how a human being would, but the synthetic logic behind that approximation is narrower and more consistent, as it discounts the fact that humans are imperfect.

Humans also bring incredible complexity and a broader range of perception to the table. We can contradict ourselves, and this would be natural. One human participant’s perception and experiences could inform the difference in how they respond from the next, while synthetic data would be uniformly shaped by congruence and invariability, no matter how much effort or work is put into making AI come close to mimicking humanlike responses.

The complexity, variability, and randomness of human nature are desirable in qualitative research. The engineers recognized this and cautioned against overly guiding or influencing randomness in AI, warning that doing so “will hard-code your picture of randomness to the point where it is no longer random.”

AI can quickly give you bulk analysis, but you might not want to rush to bring it to your stakeholders, as they may question and challenge the quality and reliability of synthetic data. Human insight continues to be vital and irreplaceable when it comes to trust, nuance, and real-world complexity in market research.

Image: Kathrine Birch

The Hybrid Approach

At the end of it all, the hosts made the point that the webinar wasn’t meant to scare people away from synthetic data but rather to open a conversation about when it makes sense to take advantage of AI-generated personas and when to steer clear of them. In fact, they recommended a hybrid approach that combines virtual respondents with recruited human participants, striking a delicate balance between synthesis and empathy.

Synthetic data would be great during the early exploratory stages of market research when you want to get an initial pulse check, something quick and good enough before getting people involved. But once you’re at the point when you need to uncover the emotional driver behind responses and decisions, understand or predict behaviors, or even gain a bit more confidence and trust in your findings, that’s when you bring in your human respondents.

This all aligns not only with a growing trend of companies coming down from the AI hype of the last few years but also with our stance on the appropriate use of AI, where we advocate for the responsible and ethical use of artificial intelligence. Instead of handing AI complete reins over all aspects of a business (or in this case, all stages of research work), we at Cascade Strategies encourage the thoughtful and practical application of artificial intelligence in combination with, or enhanced by, human experience, values and discretion.

To find out how our brand of inspired and enlightened human thinking can help you with your market research needs, please contact us here.

Additional Reading:

Can Synthetic Respondents Take Over Surveys?

Featured Image: Darlene Anderson

Top Image: Michelangelo Buonarroti

Nov

Are We Seeing The Start Of The AI Pullback?

jerry9789 0 comments artificial intelligence, Burning Questions

What Is AI Pullback?

In these last few years, we’ve heard about little else but the revolutionary and transformative influence of Artificial Intelligence, not only in the mainstream consciousness but also in various industries. With a mixture of excitement and anxiety, we’ve collectively marveled at what Generative AI could produce or emulate in little to no time at all while grappling with what all this automation means for the human workforce and talent. However, the tone appears to have shifted these past few months, with data showing large companies’ adoption of AI on the decline.

As reported by Apollo, a biweekly US Census Bureau survey of 1.2 million firms revealed a downward trend in AI utilization among companies with more than 250 employees. Falling from about 13.5% in June to under 12% in August, it’s the largest decline in AI adoption since the survey started in November 2023. Mid-sized companies, or firms with fewer than 250 employees but more than 19 workers, showed decreasing or stagnating AI adoption. Only small companies with fewer than four employees demonstrated a slight increase in AI usage.

New reports might help shed light on the AI pullback, such as a recent one from MIT indicating that 95% of AI pilot programs failed to boost company revenues or productivity. MIT’s findings were based on a review of over 300 public cases of corporate AI usage, a survey of 350 employees, and conversations with 150 industry leaders.

A recent study by METR revealed that developers surprisingly took 19% longer to complete issues when using AI coding tools than without. Yet despite actually experiencing slowdown, the developers still believed AI sped them up by 20%. This gap between developers’ perception and reality is representative of these past few years with the hyped up implementation of AI into everything software-related and unrestrained confidence in the hot new tech in spite of the unfavorable results.

IT consultancy Gartner also attempted to quantify how much work AI agents get wrong when they “hallucinate.” They found that generative AI performs office tasks wrong a staggering 70% of the time. With that much error, human oversight becomes a necessity, and in some cases, objectives would’ve been served much better had the task been assigned to a person instead of a machine.

Image: Mathias Reding

Is AI Pullback A Sign Of AI Adoption Maturity?

On a different note, the MTLC wrote that the AI pullback might seem like a slowdown but could actually be a correction or calibration, as is the natural progression with any emerging tech’s usage. They pointed out that the same MIT report found over 90% of employees using AI tools, no matter the company’s stance on AI. These workers use AI simply so they can perform their tasks faster and more efficiently, which offers another way of looking at AI adoption: “bottom-up, not just top down.”

It’s not that AI isn’t viable, but rather “the projects weren’t scoped, aligned, or designed for outcomes.” 95% of AI projects fail not because of the technology but because of how companies approach AI adoption.

AI pullback is signaling the transition from overenthusiasm to realistic expectations, from experimentation to production. For enterprise businesses to succeed with AI, they would need to identify which models, tools and processes work, which projects to invest in, and where AI would deliver the most value.

Image: Andrea Piacquadio

Cascade Strategies’ Approach To AI

The promise of increased production and revenue led companies to replace or stop hiring human workers in favor of AI at the onset of its mainstream popularity. Now that the AI pullback is happening, companies have begun hiring human workers again, not only to oversee but also to fix or improve sloppy AI outputs.

Cascade Strategies has always approached AI not merely as a tool but as an augmentation and extension of human intelligence and talent. Yes, AI is a very powerful and promising technology, but we recognized early on that, on its own, it is gravely limited by the datasets it’s fed and their quality. The eventual gaps provide the perfect breeding ground for “hallucinations,” with each iteration degrading and becoming less than what came before. We’ve always seen human intervention and guidance as essential for AI to maximize its potential; with the AI pullback, more and more companies are on the brink of discovering this incredible synergy between human and artificial intelligence.

Powered by AI, research becomes scalable and cost-effective by being applicable in all facets of the business and not just flagship projects; human oversight amplifies all that productivity and efficiency by unlocking innovative, resonant and actionable insights.

If you would like to learn more about how our human-centered market research work can benefit you or your company, feel free to contact us here.

Image: cottonbro studio

Additional Reading:

AI Pullback Has Officially Started – Will Lockett, medium.com Oct. 22, 2025

Data Shows That AI Use Is Now Declining at Large Companies – Joe Wilkins, futurism.com Sep. 8, 2025

AI adoption slows among big firms, U.S. data shows – Jullianna Anne Briones, tech.co Sep. 18, 2025

US Census Bureau: AI Adoption Has Declined for Large Companies – Conor Cawley, Sep. 11, 2025

AI adoption rate is declining among large companies — US Census Bureau claims fewer businesses are using AI tools – Hassam Nasir, tomshardware.com Sep. 8, 2025

Featured Image: MrWashingt0n

Top Image: Thirdman

Apr

AI’s Impact On Critical Thinking and Learning – What Studies Are Saying So Far

jerry9789 0 comments artificial intelligence, Burning Questions

Generative AI and Critical Thinking

In our last blog post, we touched on two studies suggesting that Generative AI is making us dumber. One of those studies, which was published in the journal Societies, aimed to look deeper into GenAI’s impact on our critical thinking by surveying and interviewing over 600 UK participants of varying age groups and academic backgrounds. The study found “a significant negative correlation between frequent AI tool usage and critical thinking abilities, mediated by increased cognitive offloading.”

Cognitive offloading refers to the utilization of external tools and processes to simplify tasks or optimize productivity. Cognitive offloading has always raised concerns over the perceived decline of certain skills — in this instance, the dulling of one’s critical thinking. In fact, the study found that cognitive offloading was worse with younger participants who demonstrated higher reliance on AI tools and less aptitude when it comes to their own critical thinking skills.

Conversely, participants with higher educational backgrounds showed better command of their critical thinking no matter the degree of AI usage, putting more confidence in their own mental acuity than the AI-based outputs. Aligning with our advocacy for the “appropriate use of AI,” the study emphasizes the importance and appreciation of high-level human thinking over thoughtless and unmitigated adoption of AI technology.

Copyright: jambulboy

Generative AI and Learning

In truth, a number of earlier studies have revealed that the arbitrary adoption of AI tools can be detrimental to one’s ability to learn or develop new skills. A 2024 Wharton study on the impact of OpenAI’s GPT-4 demonstrated that unmitigated deployment of GenAI fostered overreliance on the technology as a “crutch” and led to poor performance when such tools are taken away. The field experiment involved 1,000 high school math students who, following a math lesson, were asked to solve a practice test. They were divided into three groups, with two of these groups having access to ChatGPT while the third had only their class notes. One group of students with ChatGPT performed 48 percent better than those without; however, a follow-up exam without the aid of any laptop or books saw the same students scoring worse by 17 percent than their peers who had only their notes.

What about the second group with the GenAI tutor? They not only performed 127 percent better than the group without ChatGPT access on the first exam, but they also scored close to that group on the follow-up exam. The difference? At some point in their interactions, students in the first ChatGPT group would prompt the AI to divulge the answers, resulting in an increased reliance on GenAI to provide the solutions instead of making use of their own problem-solving abilities. The other group’s AI tutor, by contrast, was customized to behave more like a highly effective real-world tutor: it would help by giving hints and providing feedback on the learner’s performance, but it would never directly give the answer.

Similar tests with a GenAI tutor in 2023 studied the same issue of AI dependence and the value of careful deployment of AI tools. Khanmigo, a GenAI tutor developed by Khan Academy, was voluntarily tested by Newark elementary school teachers, who belong to the largest public school system in New Jersey. They came back with mixed results, with some complaining that the AI tutor gave away answers, even incorrect ones in some cases, while others appreciated the bot’s usefulness as a “co-teacher.”

Other studies regarding the effectiveness of AI tutors have shown increases in learning and student engagement. These studies have also shown that GenAI can help reduce the time it takes to get through learning materials compared to traditional methods. One study that extolled the benefits of GenAI tutors involved Harvard undergraduates learning physics in 2024, and similar to the second group in the Wharton research, the AI was prevented from directly providing the answer to students. It would guide the student through the learning process one step at a time, providing incremental updates on the student’s progress, but never outright telling them the answer. There are merits to the idea of Generative AI as a teaching assistant, but it serves students better when it is positioned to engage their attention and abilities rather than induce dependence on it to generate the answers.

Copyright: Only-shot

Can We Use GenAI Without Making Us Dumber?

These studies shed light on how we should approach AI solutions and development, whether the end product is being deployed in learning, productivity or other relevant applications. Beyond thoughtful planning and consideration of how AI tools are deployed, there should be a focus on engaging the human faculties involved, with safeguards that empower people throughout the entire process instead of letting the machine take it over wholesale. AI technology is developing rapidly, but we can keep pace and remain reasonable as long as human engagement and empowerment are kept at the core of its utilization and adoption.

Amid contemporary fears that anyone could be replaced anytime by AI, these studies highlight how vital and interconnected the human factor is to the effective deployment and development of AI tools. One could be content with the constant and consistent output AI tools generate, but progress is only possible when competent human minds are involved in the process and direction. Students can easily find answers with AI tools at their disposal, but why not advance their understanding of how solutions are formed with engaging and relatable AI-powered educational experiences? High-level human thinking grounded in values and experience can’t be replicated by machines, and perhaps there’s no better time than now to incorporate it into the heart of the AI revolution.

While AI development hopes that optimization and automation free the human mind to go after bigger and more creative pursuits, we here at Cascade Strategies simply hope that humanity emerges from all of these advancements more and not less than what it was when we entered the AI revolution.

Additional Reading:

Why AI is no substitute for human teachers – Megan Morrone, Axios

AI Tutors Can Work—With the Right Guardrails – Daniel Leonard, Edutopia

Featured Image Copyright: jallen_RTR

Top Image Copyright: danymena88

Mar

Are We Getting Dumber Because of AI?

jerry9789 0 comments artificial intelligence, Burning Questions

Is Generative AI making us dumber? Two recent studies suggest so.

A study published early this year titled “AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking” showed that growing dependence on AI could lead to a decline in critical thinking. Submitted by Michael Gerlich of the SBS Swiss Business School, the study was based on surveys and interviews of 666 UK participants from different age groups and academic backgrounds. The problem is more pronounced with younger participants who demonstrated increased reliance on AI to perform routine tasks and scored lower when it comes to critical thinking than their older counterparts.

More recently, a study by Microsoft and Carnegie Mellon University shared similar findings: the more workers depended on AI for their work, the duller their critical thinking became. The study surveyed 319 knowledge workers who used generative AI at least once a week and examined how and when they applied AI or their own critical skills when performing tasks. The more faith participants put in genAI to produce an acceptable outcome, the less they used their critical thinking skills. On the other hand, participants who had more confidence in their own abilities than in AI’s were found to exercise their critical thinking more, out of concern over unintended and overlooked machine output.

Copyright: Tara Winstead

What is Cognitive Offloading?

Both studies link overreliance on AI with cognitive offloading, which is when someone uses external tools or processes to complete tasks, resulting in reduced engagement with deep, reflective thinking. Yes, AI improves efficiency and saves time and money, but these studies suggest that it could make humans less smart over time.

However, cognitive offloading isn’t new; it has existed in a variety of forms throughout time, such as using a calculator instead of performing mental mathematics or simply making a grocery list instead of memorizing all the items you need to buy. It’s no surprise then that there are questions about the merits of the studies, such as self-reporting bias or how critical thinking was measured. Forbes suggests that AI isn’t making us dumb but lazy, while another commentator emphasizes that in order for there to be harm to one’s critical thinking abilities, one must have critical thinking to begin with.

Copyright: Pavel Danilyuk

Rethinking AI Development

Nevertheless, these studies contribute to the conversation regarding the direction of genAI development, now with the nuance of being mindful and respectful of its human users’ intelligence and faculties. Recommendations include rethinking AI designs and processes so that they incorporate and engage human critical thinking. They’re helping bring back focus to AI serving as a tool that augments rather than overtakes human capabilities.

For us at Cascade Strategies, we’re glad that these studies have renewed awareness and appreciation of human intelligence and creativity. Our world could’ve easily devolved into settling for more of the same output, so it pleases us to learn that more voices are becoming advocates and proponents not only of the “appropriate use of AI” but also of high-level human thinking.

Featured Image Copyright: Alex Knight

Top Image Copyright: Tara Winstead

Aug

The Future Is Here

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

With just 22 words, we are ushered into a future once heralded in science fiction movies and literature of the past, a future our collective consciousness anticipated but which has nonetheless taken us by surprise as we realize how unready we are. It is a future where machines are intelligent enough to replicate a growing number of significant and specialized tasks. A future where machines are intelligent enough to threaten to replace not only the human workforce but humanity itself.

Published by the San Francisco-based Center for AI Safety, this 22-word statement was co-signed by leading tech figures such as Google DeepMind CEO Demis Hassabis and OpenAI CEO Sam Altman. Both have also expressed calls for caution before, joining the ranks of other tech specialists and executives like Elon Musk and Steve Wozniak.

Earlier in the year, Musk, Wozniak, and other tech leaders and experts endorsed an open letter proposing a six-month halt on AI research and development. The suggested pause is presumed to allow for time to determine and implement AI safety standards and protocols.

Max Tegmark, physicist and AI researcher at the Massachusetts Institute of Technology and co-founder of the Future of Life Institute, once held an optimistic view of the possibilities granted by AI but has now recently issued a warning. He remarked that humanity is failing this new technology’s challenge by rushing into the development and release of AI systems that aren’t fully understood or completely regulated.

Henry Kissinger himself co-wrote a book on the topic. In The Age of AI, Kissinger warned us about AI eventually becoming capable of making conclusions and decisions no human is able to consider or understand. This is a notion made more unsettling when taken into the context of everyday life and warfare.

Working With AI

We at Cascade Strategies wholeheartedly agree with this now emerging consensus, and we believe that we’ve been diligent in upholding the responsible and conscientious use of AI. Not only have we long been advocating for the “Appropriate Use” of AI, but we’ve also made it a hallmark of how we find solutions for our clients’ needs in market research and brand management.

Just consider the work we’ve done with the Expedia Group. For years, they’ve utilized a segmentation model to engage with their lodging partners by offering advice that could lead to the partner winning a booking over a competitor. AI filters through the thousands of possible recommendations to arrive at a shortlist of the best selections optimized for revenue.

With the continued growth and diversification of their partners, they then needed a more effective approach to engaging and appealing to them, something that focuses more on each partner’s behavior and motivations. We came up with two things for Expedia: a psychographic segmentation formed into subgroups based on patterns of thinking, feeling, and perceiving to explain and predict behavior, and more importantly, a Scenario Analyzer that utilizes the underlying AI model but now delivers recommendations in very action-oriented and compelling messaging tailor-fit for that specific partner.

The best part about the Scenario Analyzer is that whether the partner follows any of the recommended advice or does nothing, Expedia still stands to make a profit while maintaining an image of personalized attentiveness to their partner’s needs. And ultimately, it’s the partner who gets to decide, not the AI.

Copyright Tara Winstead

Our Future With AI

This is how we view and approach AI: it’s not the end-all, be-all solution but rather an essential tool for increasing productivity and efficiency in tandem with excellent human thinking, judgment, and creativity. Yes, it is going to be part of our future, but in line with the new consensus, we believe that AI shaped by human values and experience is the way to go with this emerging and exciting technology.

Aug

How Can Healthcare Companies Identify Who Needs Remediation Programs?

jerry9789 0 comments artificial intelligence, Burning Questions

What Is Remediation?

The Cambridge Dictionary defines remediation as “the process of improving or correcting a situation.” Remediation programs are commonly employed in teaching and education, where they address learning gaps by reteaching basic skills with a focus on core areas like reading and math. And as pointed out in an understood.org article, remedial programs are expanding in many places in our post-COVID-19 world.

In healthcare, there’s a wide range of remediation programs, or “remedial care,” diversified based on their end goal which may include smoking cessation, anti-obesity, weight reduction, diet improvement, exercise, heart-healthy living, alcoholism treatment, drug treatment, and more. But how do you identify the people who need remedial care the most?

Who Needs Remediation?

You might say you can tell who needs remedial care by just looking at the physical aspect of the prospective patient, but this is a shortsighted answer to the question. And what about those who need remedial care for a heart-healthy lifestyle? Surely you can’t tell a likely candidate for this remediation program with just one look alone.

It goes deeper than that. What if you, a healthcare representative, could only devote remedial care to a select few individuals given limited resources and time, but you want to make sure that the whole remediation program succeeds in achieving its intended goals? Just imagine all that time, effort and resources spent only for the patient to relapse into their old ways not long after program completion, or even in the middle of the remediation process itself.

Deep Learning and Remediation

This is where deep learning comes in. Deep learning, also known as hierarchical learning or deep structured learning, is defined by Health IT Analytics as a type of machine learning that uses a layered algorithmic architecture to analyze data. In deep learning models, data is filtered through a cascade of multiple layers, with each successive layer using the output from the previous one to inform its results. Deep learning models can become more and more accurate as they process more data, essentially learning from previous results to refine their ability to make correlations and connections.
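To make the idea of a layered cascade concrete, here is a minimal Python sketch of data passing through two layers, where each layer consumes the previous layer's output. Everything in it is an illustrative assumption: the names `dense`, `relu`, `forward`, and `LAYERS`, the three input features, and the hand-picked weights are hypothetical, and no training (the "learning" step) is shown.

```python
def relu(values):
    """Keep positive values and replace negatives with zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One layer: a weighted sum of the inputs plus a bias, per output unit."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass data through the layers in order; each layer uses the previous output."""
    activations = inputs
    for weights, biases in layers:
        activations = relu(dense(activations, weights, biases))
    return activations


# Hypothetical, hand-picked weights (a real model would learn these from data).
LAYERS = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),  # layer 1: 3 inputs -> 2 units
    ([[1.0, -1.0]], [0.2]),                              # layer 2: 2 inputs -> 1 unit
]

features = [1.0, 2.0, 3.0]  # made-up input features for illustration only
score = forward(features, LAYERS)
print(score)
```

In a real deep learning model the weights would be adjusted automatically from large volumes of data, typically with a dedicated library rather than hand-written loops; the sketch only shows how output flows from one layer into the next.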

Deep learning models handle and process huge volumes of complex data through multi-layered analytics to provide fast, accurate, and actionable results or insights. When applied to the scenario we mentioned beforehand, deep learning filters through that multitude of patient data and prioritizes those who need remedial care the most.

You can also align its findings to effectively identify individuals who will not only return monetary value to your healthcare brand, but at the same time are most likely to “engage” or participate in programs offered by your company, such as wellness, diet, fitness or exercise. They can also be the best people to commit to avoiding poor lifestyle choices, such as overeating, smoking, and alcohol, helping guarantee the success of the remediation program.

With a combination of three decades of market research experience and conscientious use of AI, Cascade Strategies has been helping healthcare organizations develop advanced models to handle, filter and identify the likeliest of candidates for their program purposes. Cascade Strategies helps industry professionals not only recognize their ideal customers but also reach out to them with some of the most effective and award-winning marketing campaigns, thanks to our array of services such as Brand Development Research and Segmentation Studies. To see more examples of how we help leading worldwide companies achieve their goals, please visit our website.

Here are some of our suggestions for further reading on deep learning and healthcare:

https://builtin.com/artificial-intelligence/machine-learning-healthcare

https://research.aimultiple.com/deep-learning-in-healthcare/

https://healthitanalytics.com/features/types-of-deep-learning-their-uses-in-healthcare

Aug

The Impact of AI

In The Age of AI, which Henry Kissinger co-wrote with Eric Schmidt and Daniel Huttenlocher, Kissinger tried to warn us that AI would eventually have the capability to come up with conclusions or decisions that no human is able to consider or understand. Put another way, self-learning AI would become capable of making decisions beyond what humans programmed into it and base such conclusions on what it deems the most logical approach, regardless of how negative or devastating the consequences can be.

A common example to illustrate this point is how AI had already transformed games of strategy like chess, where given the chance to learn the game for itself instead of using plays programmed into it by the best human chess masters, it executed moves that have never crossed the human mind. And when playing with other computers that were limited by human-based strategies, the self-learning AI proved dominant.

When applied to the field of warfare, this could possibly mean AI proposing or even executing the most inhumane of plans regardless of human disagreement simply because it considers such a decision the most logical step to take.

The Influence of AI

As part of Kissinger’s warning, it’s been noted just how far-reaching AI’s influence already is in modern life, especially with its usage in innocuous things such as social media algorithms, grammar checkers, and the much-hyped ChatGPT. With the growing dependency on AI, there runs the risk of human thinking being eclipsed by machine-based efficiency and effectiveness. And how it arrives at such efficient and effective decisions becomes questionable because it could become difficult or near impossible to trace what it has learned along the way.

Just imagine someone making a decision influenced by the information fed to them by AI and yet failing to rationalize the thinking behind such a decision. That particular human may not realize it, but at that point they’re living in an AI world, where human decision-making is imitating machine decision-making rather than the reverse. It was this interchangeability Alan Turing was referring to with his famous postulate about artificial intelligence — the so-called “Turing Test” — which holds that you haven’t reached anything that can be fairly called AI until you can’t tell the difference.

Copyright Pavel Danilyuk

Appropriate Use of AI

However, it’s been pointed out that the book doesn’t follow “AI fatalism,” the common belief that AI is inevitable and humans are powerless to affect its course. The authors wrote that we are still capable of controlling and shaping AI with our human values, which is the “appropriate use” we at Cascade Strategies have been advocating for quite some time. We have the opportunity to limit or restrain what AI learns or align its decision-making with human values.

Kissinger had sounded the warning while others had already made calls to start limiting AI’s capabilities. We are hopeful that in the coming years, with the best modern thinkers and tech experts at the forefront, we progress to more of an AI-assisted world where human agency remains paramount instead of an AI-dominated world where inscrutable decisions are left up to the machines.

Jul

What To Make Of ChatGPT’s User Growth Decline

jerry9789 0 comments artificial intelligence, Burning Questions, Uncategorized

The Beginning Of The End?

More than six months after launching in November 2022, ChatGPT recorded its first decline in user growth and traffic in June 2023. Spiceworks reported that the Washington Post surmised quality issues and summer breaks from schools could have been factors in the decline, aside from multiple companies banning employees from using ChatGPT professionally.

Brad Rudisail, another Spiceworks writer, opined that a subset of curious visitors driven by the hype over ChatGPT could’ve also boosted the number of early visits, and that user growth dwindled as this group moved on to the next talk of the town.

The same article also brings up open-source AI gaining ground on OpenAI’s territory as a possible factor, thanks to models that are customizable, faster, and more useful, as well as more transparent and less likely to carry cognitive biases.

Don’t Buy Into The Hype

But perhaps the best takeaway is Mr. Rudisail’s point that we’re still in the early stages of AI and it’s premature to herald ChatGPT’s downfall with a weak signal like decreased user growth. For all we know, these could be considered normal numbers, with earlier figures inflated by the excitement surrounding its launch. “Don’t buy into the hype” is a position we at Cascade Strategies advocate when it comes to matters of AI.

The advent of AI has taken productivity and efficiency to levels never seen before, so the initial hoopla over it is understandable. However, we believe people are now starting to become a little more settled in their appraisal of AI. They’re starting to see that AI is pretty good at “middle functions” requiring intelligence, whether that be human or machine-based. But when it comes to “higher function” tasks which involve discernment, abstraction and creativity, AI output falls short of excellence. Sometimes mediocrity is acceptable, but for most pursuits excellence is needed.

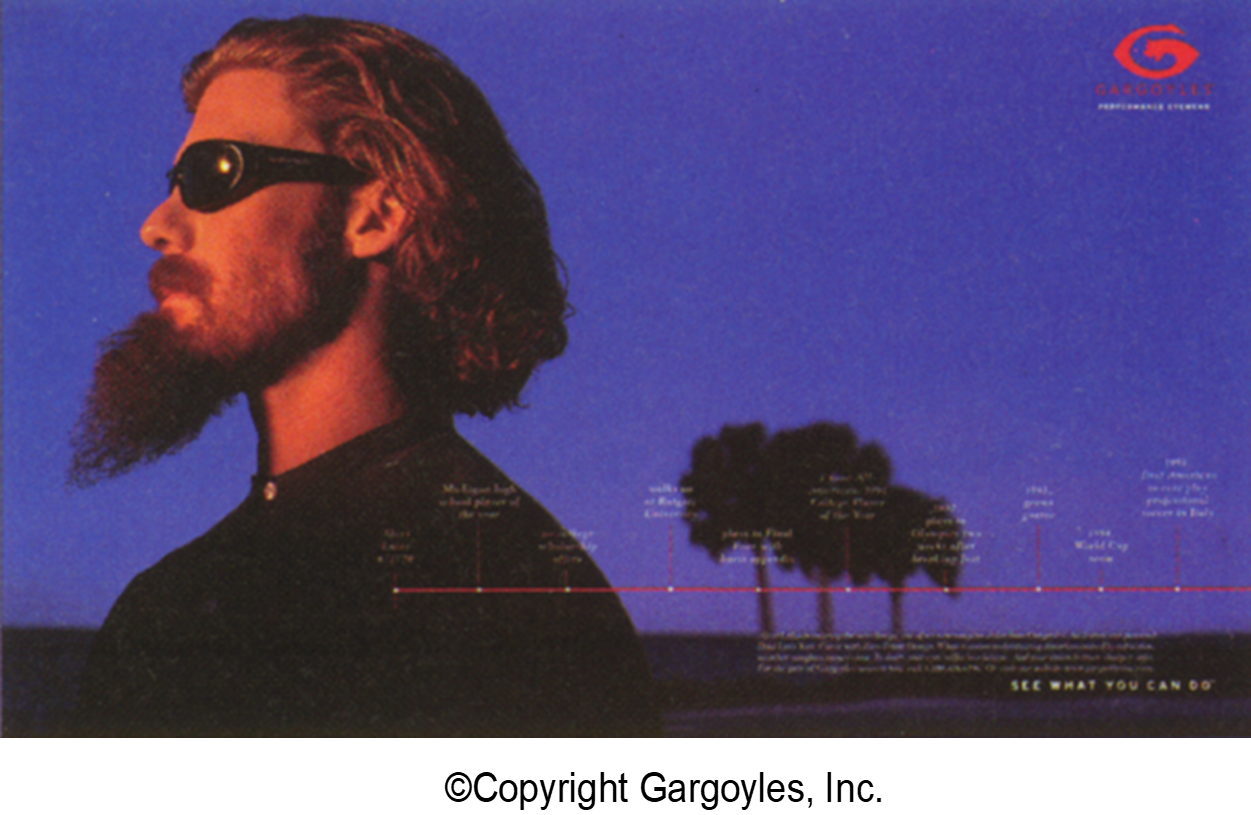

Excellence Achieved Through High Level Human Thinking

To illustrate just how AI would come out lacking in certain activities, let’s consider our case study for the Gargoyles brand of sunglasses. ChatGPT can produce a large number of ads for sunglasses at little or no cost, but most of those ads won’t bring anything new to the table or resonate with the audience.

However, when researchers spent time with the most loyal customers of Gargoyles to come up with a new ad, they discovered a commonality that AI simply did not have the power to discern. They found a unique quality of indomitability among these brand loyalists: many of them had been struck down somewhere in their upward striving, and they found the strength and resolve to keep going while the odds were clearly against them. They kept going and prevailed. The researchers were tireless in their pursuit of this rare trait, and they stretched the interpretive, intuitive, and synthesis-building capacities of their right brains to find it. Stretching further, they inspired creative teams to produce the award-winning “storyline of life” campaign for the Gargoyles brand.

All told, this is a story of seeking excellence, where hard-working humans press the ordinary capacities of their intellects to higher layers of understanding of a subject matter, not settling for simply a summarization of the aggregate human experience on the topic. This is what excellence is all about, and AI is not prepared to do it. To achieve it, humans have to have a strong desire to go beyond the mediocre. They have to believe that stretching their brains to this level results in something good.

How To Make “Appropriate Use” of AI

But that is not to say that AI and high level human thinking can’t mix. The key is to recognize where AI would best fit in your process and methodologies, then decide where human intervention comes in. This is what we call “Appropriate Use” of AI.

Take for example our case study for Expedia Group and how they engage with millions of hospitality partners. Expedia offers its partners “advice” that helps them receive a booking over their competitors. With thousands of pieces of advice to give their partners, they utilize AI to filter through all those recommendations and present only the best ones to optimize revenue. Cascade Strategies has helped them further by creating a tool called Scenario Analyzer, which uses the underlying AI model to automate the selection of these most revenue-optimal pieces of advice.

Either way, the end decision on which advice to go with (or whether they accept any advice at all) ultimately still comes from Expedia’s partner, not the AI.

Copyright ClaudeAI.uk

A Double-edged Sword

As you can see with ChatGPT, it’s easy to get carried away with all the hype surrounding AI. At launch, it was acclaimed for the exciting possibilities it represented, but now that it has hit a bump in the road, some people and outlets act as if ChatGPT is on its last legs. Hype is good when it’s necessary to draw attention; unfortunately, in most cases it sets up the loftiest of expectations when good sense gets overridden.

This is why we think a sensible mindset is the best way to approach and think about AI: to see it for what it really is. It’s a tool for increasing productivity and efficiency, not the be-all and end-all, as there is still much room for excellent human thinking backed by experience and values to come into play. Our concerns for now may not be as profound and dire as those expressed by James Cameron, Elon Musk, Steve Wozniak and others, but we’d like to believe that “appropriate use” of AI is the key towards better understanding and responsible stewardship of this emerging new technology.