Feb

Brand Health Tracking with LLM Equity (Part 1)

jerry9789 0 comments artificial intelligence, Brand Surveys and Testing, Brandview World

AI Is Disrupting The Shopper’s Experience

There’s a paradigm shift in the shopping process, and AI is the driving force behind it. Shoppers are no longer just searching online or scrolling through websites; they now use AI platforms to discover, compare, and even buy products on their behalf.

According to generative engine optimization (GEO) firm The Rank Collective, an analysis of cross-platform AI visibility data revealed that 64% of consumers now use AI tools to discover and learn about new products, a share that rises to 66% among frequent online shoppers. ChatGPT serves as a starting point for 34% of these high-intent users.

Another study, the 2025 Consumer Adoption of AI Report, drew on two multi-market surveys of 5,000 consumers aged 18-67 in the US, UK, Canada and Australia and reported that 41% of consumers trust Gen AI search results more than paid search results. It also found that only 15% trust AI less than search ads.

Additionally, Adyen’s Retail Report shared that 51% of shoppers are open to AI making purchases on their behalf. It also noted that the share of US shoppers using AI assistants rose from 12% to 35%. With these encouraging figures, 88% of retailers are considering adopting AI to handle the entire shopping process on the shopper’s behalf, with 56% of them prioritizing this technology for 2026.

Image: Google DeepMind

LLM Equity and Brand Building

AI has opened up a new world of fast and frictionless shopping experiences. While adoption is still in its early stages, companies have begun exploring this new space to understand what challenges they would need to address in order to compete and thrive.

Perhaps a good starting point is understanding Large Language Model (LLM) equity. LLM equity generally refers to ensuring that AI models are fair, unbiased, and accessible across diverse populations, preventing the reinforcement of existing disparities. It requires addressing algorithmic bias in training data, particularly around race, gender, and socioeconomic status, and especially in the field of healthcare. It’s also concerned with expanding access while performing well in non-English languages and low-resource settings.

For brand building, LLM equity is more concerned with whether your brand shows up in Gen AI search results and how it’s being represented. What theme or themes are being represented by your brand? Are those themes coherently represented in your social media? Is your current brand representation connecting and engaging with your audience? Is that connection strong enough to not only move consumers to purchase your product but also engage with your content? Is your brand content strong enough to capture the interest of prospective consumers and be remembered by them?

In other words, understanding LLM equity in brand building is understanding and tracking your brand health.

Image: TyliJura

Featured Image: Shoper.pl

Top Image: Nataliya Vaitkevich

Feb

Can AI & Human Researchers Coexist In Market Research?

jerry9789 0 comments artificial intelligence, Brand Surveys and Testing, Burning Questions

AI In Market Research Today

With 90% of the world’s data created in just two years’ time between 2021 and 2023, and the global data volume standing at 149 zettabytes by 2024, it’s understandable why AI would be readily adopted by the market research industry. Traditional methods of data collection and analysis still hold a place in market research, but they simply aren’t as powerful as AI when it comes to handling that staggering volume of data. But is AI powerful enough to take the place of human researchers?

AI enables research teams to move, process and analyze massive datasets with speed and accuracy, efficiently handling all the repetition and scale involved in the research process. From drafting questionnaires to monitoring survey data quality, from analyzing open-ends to formulating dashboards and charts, AI fully automates the research process leading to faster and better decisions at a scale beyond the capabilities of human researchers.

But is AI the endgame for market research? Does it make human researchers obsolete?

Image: geralt

Cascade Strategies and AI

Cascade Strategies conducted a member perceptions study for a company looking to develop and implement a brand typology. The overall goal of the study was to help them better understand their different customer types’ overall motivations and aspirations for more effective engagement. As part of the study, we conducted an online survey with over 1,500 of their randomly selected members. We then utilized an AI-assisted Self-organizing Map (SOM) to run all the cases recursively, sometimes millions of times, until it optimized the separations among the groups. The SOM produced a 6-group solution, with each group having a dominant passion that is served well or poorly by the company, ranging from proclivity for deals and new brands to yearning for customization and connection with other users.
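For readers curious about what a self-organizing map does mechanically, here is a minimal sketch in Python with NumPy. It is not the model used in the study: the 2x3 grid (chosen only to echo a 6-group solution), the learning-rate and neighborhood settings, the function names `train_som` and `assign_groups`, and the random stand-in data are all assumptions made for illustration.

```python
import numpy as np


def train_som(data, rows=2, cols=3, epochs=50, lr0=0.5, sigma0=1.0, seed=0):
    """Fit a tiny self-organizing map and return its weight grid.

    data: array of shape (n_samples, n_features), ideally scaled to [0, 1].
    rows * cols is the number of candidate groups (6 here, by assumption).
    """
    rng = np.random.default_rng(seed)
    n_features = data.shape[1]
    weights = rng.random((rows, cols, n_features))
    # Grid coordinates of every node, used for neighborhood distances.
    grid = np.stack(
        np.meshgrid(np.arange(rows), np.arange(cols), indexing="ij"), axis=-1
    )

    for epoch in range(epochs):
        # Learning rate and neighborhood radius both shrink as training proceeds.
        frac = epoch / epochs
        lr = lr0 * (1.0 - frac)
        sigma = sigma0 * (1.0 - frac) + 1e-3
        for x in data[rng.permutation(len(data))]:
            # Best-matching unit: the node whose weights are closest to x.
            dists = np.linalg.norm(weights - x, axis=-1)
            bmu = np.unravel_index(np.argmin(dists), dists.shape)
            # Pull the best-matching unit and its grid neighbors toward x.
            grid_dist2 = np.sum((grid - np.array(bmu)) ** 2, axis=-1)
            influence = np.exp(-grid_dist2 / (2.0 * sigma ** 2))
            weights += lr * influence[..., None] * (x - weights)
    return weights


def assign_groups(data, weights):
    """Label each respondent with the index of its best-matching node."""
    flat = weights.reshape(-1, weights.shape[-1])
    dists = np.linalg.norm(data[:, None, :] - flat[None, :, :], axis=-1)
    return np.argmin(dists, axis=1)


# Synthetic stand-in for survey responses: 300 respondents, 4 rating items.
rng = np.random.default_rng(42)
responses = rng.random((300, 4))
som_weights = train_som(responses)
groups = assign_groups(responses, som_weights)
```

Each respondent ends up with a group index from 0 to 5. The repeated passes over the data are what the description above calls running the cases recursively; naming and interpreting the resulting groups is still left to the researcher.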

The AI has done the heavy lifting of scanning the dataset, surfacing themes, and summarizing the respondents. It has done enough to structure the story of each group but not enough to tell or paint the whole picture.

This is where the human researchers at Cascade Strategies step in. We came up with names for each group that best described their dominant passion, names resonant enough that they not only convey an immediate idea of what each group is most passionate about but also make the groups fundamentally relatable even if one doesn’t necessarily share the same propensities: Shopper, Seeker, Learner, Sharer, Individualizer and Intellectual.

In isolation, each group achieves the study’s goal of guiding the company on the most effective way to engage with them. Their sum, however, grants the company an overview of how to improve and further develop its platform by introducing new features that matter to one particular group but would benefit its membership base as a whole when implemented. For example, the Sharer would appreciate more opportunities to connect and interact with other experts and enthusiasts who share the same interests, which the platform could provide by making it easier to write reviews and share content.

AI surfaced all those patterns and signals from the survey data, but it lacked the judgment and context to elevate them into a meaningful and coherent narrative. Human researchers, on the other hand, saw what story could be told from those themes and, by layering in human understanding, were able to tie them to actionable business decisions.

Image: Christina Morillo

Leveraging AI In Market Research

So would AI replace human researchers? We’d like to frame our response to this question with the words of Joseph Weizenbaum, one of AI’s early researchers: “We can count, but we are rapidly forgetting how to say what is worth counting and why.”

Yes, AI is powerful enough to handle large amounts of data to identify patterns, cluster themes, and summarize respondents, but it generates outputs rather than insights. Outputs foster decisions rooted in logic and reasoning, but insights spring from judgment and context. Outputs can provide directions and surface themes from which stories can be framed, but insights take it one step further by asking what matters and why it matters, adding depth and resonance to the story.

In addition, Weizenbaum posits that a computer program can make decisions but it can’t ultimately choose. Just like insights, choosing requires judgment, which draws on emotions, values and experience.

We at Cascade Strategies are among a growing number of proponents who believe that AI works best as a tool and extension of human intelligence and talents. AI strips the friction from manual, repetitive work without compromising methodological rigor and accuracy, but rather than adopting it for the sake of automation, we choose to see it as a freeing and empowering agent that enables researchers to focus more on interpreting data with the context of human understanding and values, translating insights into sensible and confident business decisions. Just as quantitative and qualitative research can coexist in the same study, we choose to live in a world where AI and human researchers work together towards the same goal of finding and crafting meaningful and relevant stories worth telling.

Image: Pavel Danilyuk

Featured Image: Ron Lach

Top Image: kc0uvb

Feb

What’s Happening Nowadays With Survey Samples? (Part 2)

jerry9789 0 comments artificial intelligence, Brand Surveys and Testing, Brandview World, Burning Questions

Why The MR Industry Should Start Collectively Caring About Data Quality

In his recent LinkedIn post, JD Deitch offered two explanations for why the sample market is what it is right now and has been for the past two decades: clients either don’t know or don’t care how bad the quality of the sample data they’ve been receiving is. Between not knowing and not caring, the latter is the more egregious of the two.

In Part 1 of this series, we touched on the challenges presently faced by the sample market: participant engagement, polling representivity and fraud, as illustrated by the Op4G / Slice MR scandal. Of the three, fraud captures the most attention; it is the one that makes headlines, the one that stirs up the most discussion and calls for resolution. The threat of fraud is on everyone’s mind, and that’s why measures and protections are in place and constantly being developed to detect and address it.

Fraud, however, may not be the key issue out of the three. In the Greenbook podcast, Deitch pointed to participant engagement as a long-standing challenge that the industry has always been aware of and has tried multiple times to solve. Fraud has always been tagged with large sums of dollars lost or deceptively gained; what most don’t see or take into account is how much revenue or opportunity is missed because of bad or low-quality data generated by poor engagement. Yes, fraud undermines credibility and trust in the industry, but there have always been avenues to regain them; market failures due to poor data quality may not be as visible, but the damage they create lingers and keeps exerting influence. And that damage through the decades has now translated into the indifference clients feel towards the sample data being produced. As Deitch puts it, “Most survey research projects just don’t matter enough for clients to demand better.”

The current product coming out of the sample market has been commoditized to the point that it hardly affects business decisions. Clients don’t see enough value in the present product, or place enough confidence in it, to justify spending more to find out what people are thinking. And when an alternative like AI comes along, clients are roused enough to spend and explore what the other options offer.

“Companies will always want to know what people think. That need isn’t going away.” And this is why a renewed focus on the participant experience becomes key. Rather than settle for respondents who merely have time to fill out questions, find and attract people who are the most invested and involved in the subject matter. Incentivize them for their time and underscore why their thoughts and feelings matter. Connect and foster a healthy yet professional relationship with them. Encourage them to find or refer similar personalities. Build and maintain an engaged panel of quality participants.

New and emerging technology excites clients and investors, so look into building it into your methods and processes. Learn the best way to implement AI. Don’t simply deploy new tech to cut costs and time; discover where AI would complement human talent the most and where human supervision is most critical. Collaborate with tech people and developers to design and build systems and applications that align with your goals and values.

The level of care and effort market research agencies put into their research work is always reflected in the end product. At Cascade Strategies, we believe excellent, high-quality data resonates, and we’re confident it will strike enough of a chord with clients to make them care. And when clients care enough, they’ll be willing to find out more and demand more.

To learn more about how we leverage inspired human thinking with modern cutting edge technology to achieve high quality market research for our clients, please contact us here.

Image: Tumisu

Featured Image: F1Digitals

Top Image: Mohamed_hassan

Jan

What’s Happening Nowadays With Survey Samples? (Part 1)

jerry9789 0 comments artificial intelligence, Brand Surveys and Testing, Brandview World, Burning Questions

What is The Op4G / Slice MR Scandal?

Op4G (Opinions4Good) and its offshoot Slice were US-based market research companies whose senior leaders were indicted in April 2025 for selling fake market research over the course of a 10-year period, generating $10M in fraudulent revenue. While they marketed their business model of maintaining “a quality, engaged membership panel” of individuals eligible to participate in surveys, in 2014 they began recruiting certain individuals called “ants” to complete surveys and increase revenue, producing fabricated market data in the process. Companies that purchased survey data from Op4G or Slice between 2014 and 2024 are encouraged to contact the U.S. Attorney’s office.

The scheme raises questions about how far this fraudulent market data has permeated the industry, especially since Op4G and Slice presented their survey findings as high quality and backed by ISO certification. It brings to light the importance of upholding transparency and accountability in the market research industry despite the availability of certain shortcuts to cut cost and time.

Image: jesben

What is Enshittification?

The Op4G / Slice MR scandal is perhaps emblematic of the enshittification of platforms. The term was popularized by Canadian writer Cory Doctorow in a 2022 blog post; Wikipedia defines enshittification as “a process in which two-sided online products and services decline in quality over time.” JD Deitch, who cited Doctorow’s article in a Greenbook podcast as the inspiration for writing his ebook, described enshittification as “what happens in platforms when they start to seek yield and profitability and growth.”

Together with Lenny Murphy on that Greenbook podcast, JD touched on how enshittification compounds the long-standing issues in the sample market when it comes to producing high-quality and reliable market data: those of participant engagement and polling representivity. The participant experience has been neglected and treated as an afterthought by the industry for so long that attracting a wide and diverse pool of engaged and relevant respondents has remained a constant challenge. When participants aren’t incentivized enough to engage with the survey experience, the quality of the data and insights produced risks falling short of its true potential. And when you simply aren’t attracting enough respondents, or aren’t giving those who are disinclined to participate in surveys a reason to change their minds, you’re missing out on the opportunity to tap into subsets of the population that could’ve given new and interesting perspectives.

The emergence of AI exacerbates issues and attitudes towards the participant experience. When client companies have not just years but decades’ worth of survey data and studies, they could simply shift spending away from participant-driven research to developing AI that could produce synthetic data from their stock. And when market research companies don’t own or have access to that kind of survey information, desperate firms might resort to taking shortcuts like programmatic sampling or, as in the case of Op4G and Slice, fraudulent means to generate survey data and revenue.

The quality of the synthetic data being produced from all that past data and studies comes to mind, too. Yes, it would depend on the quality of the training data that Large Language Models (LLMs) are fed. Excellent synthetic data would enable scaling and efficiency. However, excellent synthetic data would be tethered to the subject matter it excels at; deviating from that subject matter might produce less-than-desired outputs, far from potential breakthroughs or new discoveries. And despite AI’s best attempts to optimize based on what it was trained on, there’s also always the risk of it hallucinating. When one cares enough to understand, working or investing with flawed data is simply intolerable.

Image: Tumisu

Featured Image: andibreit

Top Image: Tima Miroshnichenko

Jan

Financial Services Sector Expanding Rapidly But There Will Be Growing Pains

jerry9789 0 comments artificial intelligence, Brand Surveys and Testing, Brandview World

SIS International says the outlook for the financial services sector is one of solid and consistent growth, with the sector expected to reach $47.55 trillion in 2029, but it is not without anxieties. They shared that around three-quarters of financial services executives in a recent survey expressed concerns over their institutions’ ability to navigate economic instability, adapt to emerging technologies and shifting regulations, and sustain existing revenue sources in the coming decade.

SIS points to five key trends where financial institutions could focus their research priorities as the financial services landscape continues to develop and change:

- AI implementation: Despite high technology adoption rates, a good majority of banking customers struggle to trust AI applications.

- Digital Banks: More and more customers are switching to neobanks. Learning the reasons behind this growing preference would help traditional financial organizations reposition themselves while digital banks would benefit from these insights through sustainable growth and expansion.

- Mobile Banking: Digital channels have established themselves as the primary form of interaction between customers and their banks, and fostering engagement and a more personalized experience through research could lead to improved loyalty.

- Expanding Financial Services Options: Delving into growing technology-oriented fronts opens up new and additional avenues to offer financial services digitally.

- Addressing Security Concerns: Adopting security measures against fraud and privacy risks is just the start, but targeted research would help recognize which protections build confidence and trust based on the concerns expressed by different customer segments.

High-quality market research would help financial institutions understand what would cause their customers to hesitate over or quickly adapt to new technology or measures, find the most effective way to communicate benefits and advantages, target the most receptive customer segments, and identify new opportunities and channels, just to name a few.

Overall, effective market research into these five key trends should enable financial organizations to make confident and strategic decisions aligned with business goals.

Image: Audy of Course

Featured Image: Tima Miroshnichenko

Top Image: Jakub Zerdzicki

Dec

Can AI Replace Human Respondents In Qualitative Research?

jerry9789 0 comments artificial intelligence, Brand Surveys and Testing, Brandview World, Burning Questions

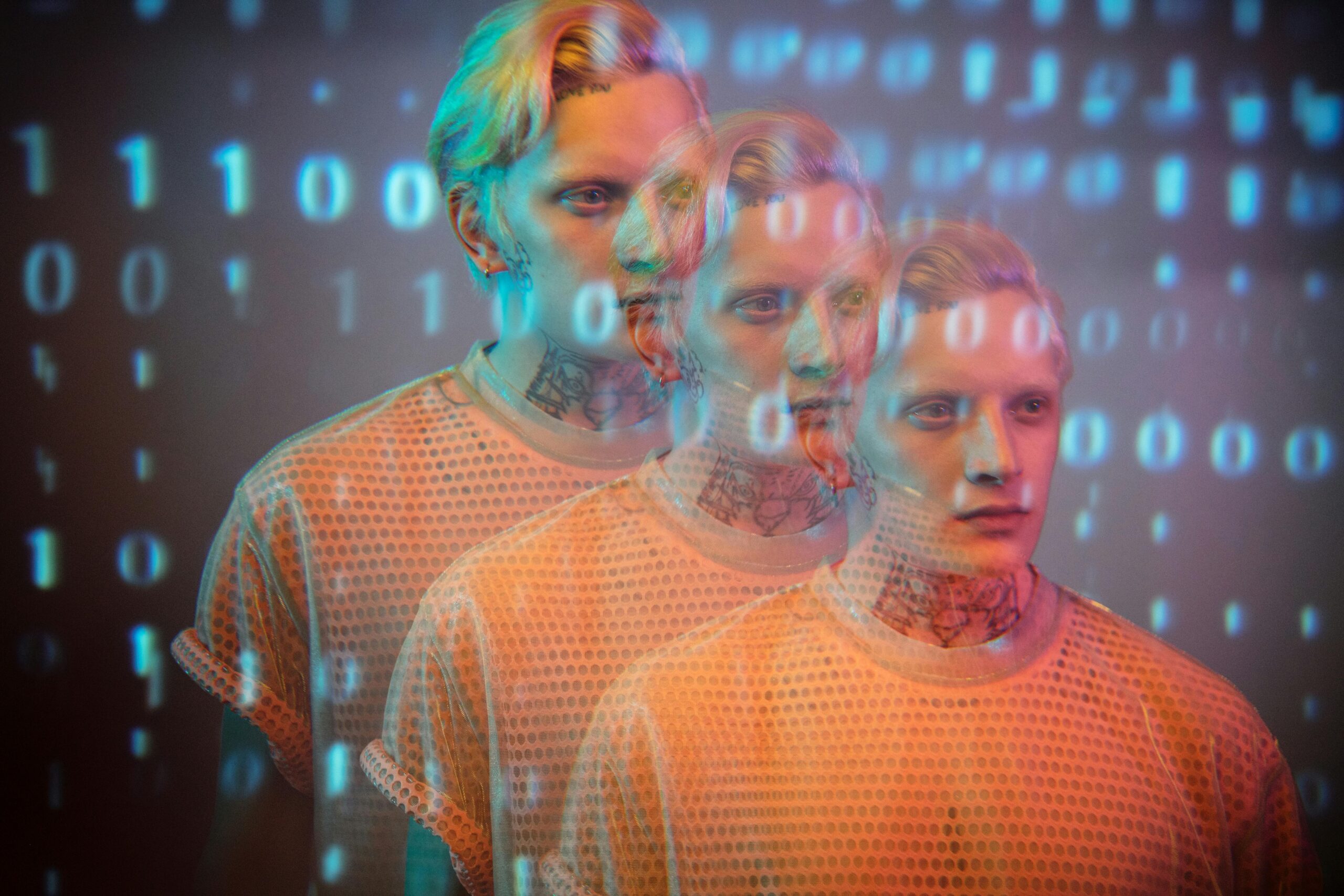

Like most industries these days, market research is no stranger to AI with its broad applications including the employment of synthetic respondents, which are individual profiles constructed by Large Language Models (LLMs) from real or simulated data. They offer fast, cheap, and scalable synthetic data that closely mimics how human participants would respond, a boon for quantitative research. But can synthetic respondents be just as effective in qualitative research? Can AI-powered profiles fully take over the role of human respondents in market research?

Image: Diana

Synthetic Respondents and Qualitative Research

L&E Research recently hosted a webinar sharing their findings and observations from testing synthetic respondents across a variety of qualitative research tasks. They shared that AI characteristically produces quick, structured, and consistent surface-level insights. It does well with detecting macro trends in usage or preferences, screening concepts when you need to compare multiple ideas at scale, and spotting issues during survey testing. It is also capable of gap-filling or simulating missing segments from known data, as well as bulk analysis for summarizing large volumes of open-ends quickly.

The key takeaway L&E found is that AI can describe what people do, but it falls short of telling why people do it. AI fundamentally excels at following patterns, but it struggles to find the emotional driver, the motivation behind certain responses. AI can match logic, but it won’t be able to fill in tone, nuance or context like human insight and experience can.

Most AI models are also built on public data and may have no way of knowing how real people would respond to certain questions. When the engineers tried to steer AI agents toward how real participants would respond, the agents rejected this notion and firmly stood by the perspective formed from the vastness of public data.

Additionally, AI can be absolutely and confidently wrong. Synthetic data can look convincingly human but since AI relies on patterns instead of experience, the air of confidence it puts up doesn’t guarantee accuracy.

Of course, the hosts added a disclaimer that this is where synthetic respondents are right now, as no one can tell how different things could be in the years to come. But the continued utilization of AI in market research, or any other industry for that matter, is inevitable thanks to the efficiency it grants in operations and execution, and that is reason enough to continue studying and developing synthetic respondents.

Image: Ron Lach

Why The Human Factor Matters

In market research, emotions matter and context counts. AI can prove to be a powerful partner but it is no replacement for lived insight or validation. Human researchers are simply going to remain essential.

AI’s inherent structure and consistency is representative of its pursuit of perfection; however, humans aren’t perfect, nor simple. Humans are emotional and oftentimes, irrational. AI participants would respond based on their perfect approximation of how a human being would, but the synthetic logic behind that would be narrower and more consistent, as it discounts the fact that humans are imperfect.

Humans also bring incredible complexity and a broader range of perception to the table. We can contradict ourselves, and this would be natural. One human participant’s perception and experiences could inform the difference in how they respond from the next, while synthetic data would be uniformly shaped by congruence and invariability, no matter how much effort or work is put into making AI come close to mimicking humanlike responses.

The complexity, variability, and randomness of human nature are desirable in qualitative research. The engineers recognized this and cautioned that overly guiding or influencing randomness in AI “will hard-code your picture of randomness to the point where it is no longer random.”

AI can quickly give you bulk analysis, but you might not want to rush to bring it to your stakeholders, as they would question and challenge the quality and reliability of synthetic data. Human insight continues to be vital and irreplaceable when it comes to trust, nuance, and real-world complexity in market research.

Image: Kathrine Birch

The Hybrid Approach

At the end of it all, the hosts made a point that the webinar wasn’t meant to scare people away from synthetic data but rather bring a valid conversation on when it makes sense to take advantage or steer clear of AI-generated personas. In fact, they recommended utilizing a hybrid approach of employing virtual respondents and recruiting human participants, striking a delicate balance between synthesis and empathy.

Synthetic data would be great during the early exploratory stages of market research when you want to get an initial pulse check, something quick and good enough before getting people involved. But once you’re at the point when you need to uncover the emotional driver behind responses and decisions, understand or predict behaviors, or even gain a bit more confidence and trust in your findings, that’s when you bring in your human respondents.

This all aligns not only with a recent growing trend of companies coming around from the AI hype of the last few years but also with our stance on the appropriate use of AI, where we advocate for the responsible and ethical use of artificial intelligence. Instead of handing AI complete reins over all aspects of a business, or in this case all stages of research work, we at Cascade Strategies encourage the thoughtful and practical application of artificial intelligence in combination with or enhanced by human experience, values and discretion.

To find out how our brand of inspired and enlightened human thinking can help you with your market research needs, please contact us here.

Additional Reading:

Can Synthetic Respondents Take Over Surveys?

Featured Image: Darlene Anderson

Top Image: Michelangelo Buonarroti

Nov

Are We Seeing The Start Of The AI Pullback?

jerry9789 0 comments artificial intelligence, Burning Questions

What Is AI Pullback?

In these last few years, we’ve heard of little but the revolutionary and transformative influence of Artificial Intelligence, not only in the mainstream consciousness but also in various industries. With a mixture of excitement and anxiety, we’ve collectively marveled at what Generative AI could produce or emulate in little to no time at all while grappling with what all this automation means for the human workforce and talent. However, the tone appears to have shifted in the past few months, with data showing large companies’ adoption of AI on the decline.

As reported in Apollo, a biweekly US Census Bureau survey of 1.2 million firms revealed a downward trend in AI utilization for companies with more than 250 employees. Falling from about 13.5% in June to under 12% in August, it’s the largest decline in AI adoption since the survey started in November 2023. Mid-sized companies, or firms with fewer than 250 employees but more than 19 workers, showed decreasing or stagnating AI adoption. Only small companies with fewer than four employees showed a slight increase in AI usage.

New reports might help shed light on the AI pullback, such as a recent one from MIT indicating that 95% of AI pilot programs failed to boost company revenues or productivity. MIT’s findings were based on a review of over 300 public corporate AI deployments, a survey of 350 employees, and conversations with 150 industry leaders.

A recent study by METR revealed that developers surprisingly took 19% longer to complete issues when using AI coding tools than without. Yet despite actually experiencing slowdown, the developers still believed AI sped them up by 20%. This gap between developers’ perception and reality is representative of these past few years with the hyped up implementation of AI into everything software-related and unrestrained confidence in the hot new tech in spite of the unfavorable results.
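To make that perception gap concrete, here is a small illustrative calculation. The 60-minute baseline task is hypothetical, and reading “sped them up by 20%” as “20% less time” is an assumption; only the 19% and 20% figures come from the study as cited above.

```python
# Hypothetical baseline: a task that takes 60 minutes without AI tools.
baseline_minutes = 60.0

# Measured effect cited above: tasks took 19% longer with AI tools.
actual_minutes = baseline_minutes * 1.19

# Perceived effect, read here as "20% less time" (an assumption).
perceived_minutes = baseline_minutes * (1 - 0.20)

# Difference between what happened and what developers believed happened.
gap_minutes = actual_minutes - perceived_minutes
print(
    f"actual: {actual_minutes:.1f} min, "
    f"perceived: {perceived_minutes:.1f} min, "
    f"gap: {gap_minutes:.1f} min"
)
```

Under these assumptions, the hour-long task actually takes a little over 71 minutes with AI, while the developer believes it took about 48.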

IT consultancy Gartner also attempted to quantify how much work AI agents get wrong when they “hallucinate.” They found that generative AI performs office tasks wrong a staggering 70% of the time. With that much error, human oversight becomes a necessity and, in some cases, objectives would’ve been served much better had the task been assigned to a person instead of a machine.

Image: Mathias Reding

Is AI Pullback A Sign Of AI Adoption Maturity?

On a different note, the MTLC wrote that the AI pullback might seem like a slowdown but could actually be a correction or calibration, as is the natural progression of any emerging tech’s usage. They pointed out that, according to the same MIT report, over 90% of employees use AI tools regardless of their company’s stance on AI. These workers utilize AI simply so they can perform their tasks faster and more efficiently. This is another way of looking at AI adoption: “bottom-up, not just top down.”

It’s not that AI isn’t viable, but rather “the projects weren’t scoped, aligned, or designed for outcomes.” 95% of AI projects fail not because of the technology but because of how companies approach AI adoption.

AI pullback is signaling the transition from overenthusiasm to realistic expectations, from experimentation to production. For enterprise businesses to succeed with AI, they would need to identify which models, tools and processes work, which projects to invest in, and where AI would deliver the most value.

Image: Andrea Piacquadio

Cascade Strategies’ Approach To AI

The promise of increased production and revenue led companies to replace or cease hiring human workers in favor of AI during the onset of its mainstream popularity. Now that the AI pullback is happening, companies have begun hiring human workers again, not only to oversee but also to fix or improve sloppy AI outputs.

Cascade Strategies has always approached AI not merely as a tool but as an augmentation and extension of human intelligence and talent. Yes, AI is a very powerful and promising technology, but we recognized early on that, on its own, it is gravely limited by the datasets it’s fed and their quality, with the eventual gaps providing the perfect breeding ground for “hallucinations,” each iteration degrading and becoming less than what came before. We’ve always seen human intervention and guidance as essential for AI to maximize its potential; with the AI pullback, more and more companies are on the brink of discovering this incredible synergy between human and artificial intelligence.

Powered by AI, research becomes scalable and cost-effective by being applicable in all facets of the business and not just flagship projects; human oversight amplifies all that productivity and efficiency by unlocking innovative, resonant and actionable insights.

If you would like to learn more about how our human-centered market research work can benefit you or your company, feel free to contact us here.

Image: cottonbro studio

Additional Reading:

AI Pullback Has Officially Started – Will Lockett, medium.com Oct. 22, 2025

Data Shows That AI Use Is Now Declining at Large Companies – Joe Wilkins, futurism.com Sep. 8, 2025

AI adoption slows among big firms, U.S. data shows – Jullianna Anne Briones, tech.co Sep. 18, 2025

US Census Bureau: AI Adoption Has Declined for Large Companies – Conor Cawley, Sep. 11, 2025

AI adoption rate is declining among large companies — US Census Bureau claims fewer businesses are using AI tools – Hassam Nasir, tomshardware.com Sep. 8, 2025

Featured Image: MrWashingt0n

Top Image: Thirdman

Oct

A Human Center Makes Market Research All The More Powerful

jerry9789 0 comments artificial intelligence, Brand Surveys and Testing, Brandview World

The Future Of Research Is Here

You’ve seen it and there’s no denying it. Industries have been reshaped by the increasing utilization of Artificial Intelligence in just the last few years. Promising and delivering speed and optimization at a fraction of the cost and resources, it’s powerful, revolutionary and exciting. And as with any emerging technology, it comes with its own set of anxieties.

In line with its growing popularity and adoption, people in different industries have been expressing nervousness over being replaced in their jobs by AI. Certain repetitive, data-driven tasks are at the greatest risk of being supplanted by AI. However, AI also opens up opportunities to shift focus toward, and upskill in, the more complex and creativity-driven facets of work roles, creating new jobs or augmenting existing ones.

The research industry is just as affected by AI’s growing application. Still, it’s naive to assume that researchers will be replaced wholesale by AI, because there’s more to delivering research results than just gathering and crunching data.

Image: Circe Deyer

Our take on the integration of AI into research

We’ve always maintained that AI is a good advisor but a poor decision-maker. We’d add that it’s an even worse storyteller, if it is one at all.

Cascade Strategies has been in the market research industry for over three decades now, serving some of the biggest local and international companies. You could say we’ve seen it all in this industry, but we’re just as fascinated as everyone else by the mainstream popularity of AI in the past few years. We’ve applied it in our methodologies and been impressed by its operational benefits and how it has changed industries, but in the end, we know that it is not the be-all and end-all of research work. We believe that AI serves us best as a powerful extension of human judgment, creativity, and insight.

AI can be fed large datasets to approximate human thinking, but we believe it can never replicate human perspicacity, the kind of intelligence honed and guided by human values and experience. Take a look at our Expedia Group Case Study, where we used AI to generate multiple revenue-generating scenarios, then tempered the decision-making process by applying high-level human thinking to craft messaging that resonates with the end-user.

AI-driven research can produce results based on what has come before, but it can never uncover the truly novel, meaningful and resonant insights that high-level human thinking unlocks. These are the insights that empower big and sweeping decisions. On their own, data-based results from AI can seem lifeless and unrelatable. But when they are imbued with human interpretation, that output is elevated into a masterful narrative that sparks imagination, questions boundaries, and transforms perspectives.

Image: geralt

Featured Image: mohamed mahmoud hassan

Top Image: geralt

Oct

AT&T allowed us to conduct qualitative and quantitative research for them. The result was a key brand insight about the Worry Wort, a kind of subscriber who preferred AT&T over rivals Verizon and T-Mobile for a variety of reasons and tended to stick with AT&T for the long haul. The campaigns built around the Worry Wort allowed AT&T to reduce churn and fend off wireless competitors.

It’s doubtful that submitting the same data to AI would produce a finding as incisive as the Worry Wort. This is something to bear in mind if you’re a telecommunications brand seeking to thrive: human perspicacity counts.

There’s a kind of intelligence AI can’t reach. It has dimension, soul, and human inspiration. In the telecommunications business, we’d do well to remember this as we pour more datasets into the maw of AI. If you’re in the telecommunications business and need human perspicacity, you might call Cascade Strategies. We can help you see things AI can’t see.

Featured Image: (Public Domain)

Top Image: Brownings at English Wikipedia

Sep

Pan Pacific Hotels allowed us to conduct qualitative and quantitative research for them. The result was a key brand insight about the Cosmopolite, a kind of guest who preferred Pan Pacific lodging even when other hotel offers were better. The campaigns built around the Cosmopolite allowed Pan Pacific Hotels to weather economic downturns and pandemics, and even expand into key markets in Asia.

It’s doubtful that submitting the same data to AI would produce a finding as incisive as the Cosmopolite. This is something to bear in mind if you’re a hospitality brand seeking to thrive: human perspicacity counts.

There’s a kind of intelligence AI can’t reach. It has dimension, soul, and human inspiration. In the hospitality business, we’d do well to remember this as we pour more datasets into the maw of AI. If you’re in the hospitality business and need human perspicacity, you might call Cascade Strategies. We can help you see things AI can’t see.